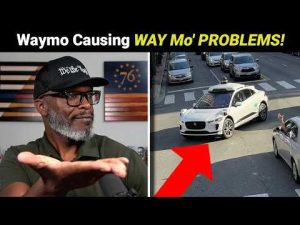

The rise of autonomous vehicles, such as those operated by Waymo, has sparked considerable public concern and curiosity. There’s excitement about what these vehicles could offer, but also trepidation about how they perform in practice. The appeal of driverless technology lies in its promise to reduce human error on the roads, but recent incidents suggest we’re not quite there yet. Consider the reports of these cars making erratic maneuvers, such as getting stuck at railroad crossings or driving into active emergency scenes. In these alarming instances, the technology seems not just immature but potentially dangerous.

Some argue that this technology is a step in the right direction, hoping these vehicles will eventually make roads safer and commuting more efficient. But what happens when the technology makes a mistake? The question of accountability looms large: if a self-driving car does something illegal or unsafe, who bears the responsibility? Traditional traffic laws assume a human driver who can be held accountable. With no one behind the wheel, enforcing those laws becomes a vexing problem.

There is also speculation about the extent of their autonomy, including suggestions that the cars are remotely controlled from distant locations such as the Philippines. In fact, these vehicles operate primarily via onboard systems rather than remote control, but the speculation itself raises questions about the legitimacy of safety claims from companies like Waymo. Is a human stepping in when the system falters, or are these supposedly ‘autonomous’ vehicles truly at the mercy of their own programming glitches? Either way, a simple technological hiccup could have disastrous consequences on the streets.

It’s also worth noting the inconvenience these vehicles cause when they encounter complex situations. Imagine being stuck behind an autonomous car that hesitates to make a turn in busy traffic. Impatience and frustration are bound to mount, not just for its passengers but also for the drivers around it. Faced with this blend of caution and uncertainty, one wonders whether this is truly the future we want. Riders effectively become beta testers each time they hail one of these vehicles, subjected to real-world trial and error.

In light of these challenges, perhaps the rush toward autonomous vehicles should give way to a more measured approach. It might be wise to refine the technology further in controlled environments before unleashing it on public streets. The emphasis should remain on protecting public safety and ensuring that technological advances do not outpace our ability to manage them responsibly. However alluring the promise of self-driving cars, common-sense safeguards must prevail, so that these vehicles earn trust through proven reliability rather than hopeful speculation.